A memory snapshot can explain why an app misbehaves. Collecting one locally is straightforward:

- Download ProcDump

- Find the process ID in Task Manager

- Trigger on-demand collection:

procdump.exe -accepteula -ma 123

Why does that not work in the cloud?

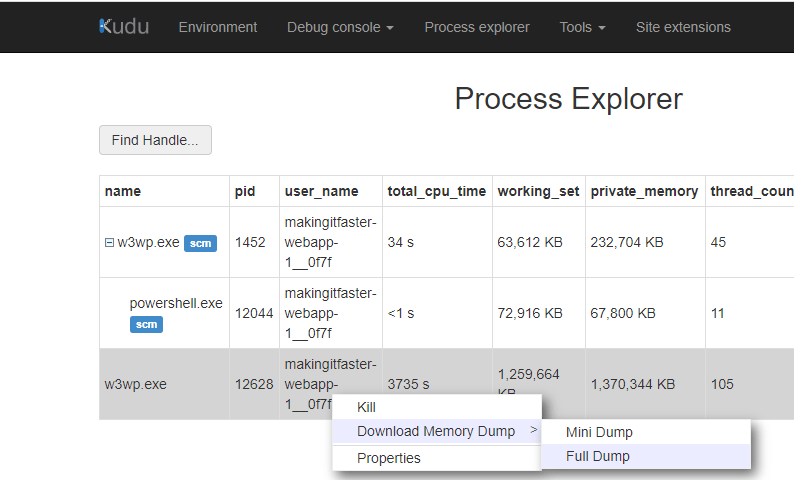

Although Kudu can generate memory snapshots on demand, it frequently fails in practice:

The operation tries to capture a file that is a few GB in size and then download it over plain HTTPS; a connection timeout or a patch of bad network weather brings the whole thing to a halt.

How to do it better?

Azure WebApps already ship with the Sysinternals tools, including ProcDump, so command-line collection is possible from the Kudu console.

The resulting file lands on the file system and can be downloaded at any later time.

Collection time

Let's copy ProcDump into the local\Temp folder, since the snapshots will be saved there:

D:\devtools\sysinternals> copy-item .\procdump.exe -destination D:\local\Temp

ProcDump was copied successfully:

D:\local\Temp> ls

The next step is to find the ID of the process we’d like to snapshot (12628 in our case):

Time to trigger collection with the -accepteula flag 😉
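If the collection step is scripted rather than typed by hand, the command line can be assembled as below. This is only a sketch of the argument layout: the flags are the ones used earlier in this post, the `snapshot_<pid>.dmp` file name is a hypothetical choice, and it assumes Python is available wherever ProcDump runs.

```python
import ntpath
import subprocess


def build_procdump_args(pid, dump_dir=r"D:\local\Temp", exe="procdump.exe"):
    """Return the argument list for a full (-ma) dump of the given process ID."""
    if pid <= 0:
        raise ValueError("pid must be a positive integer")
    # Hypothetical file name; ProcDump chooses its own name when none is given.
    dump_path = ntpath.join(dump_dir, f"snapshot_{pid}.dmp")
    # -accepteula avoids the interactive license prompt, which a console
    # without a UI cannot answer.
    return [exe, "-accepteula", "-ma", str(pid), dump_path]


def collect_dump(pid):
    """Run ProcDump and return the path it was asked to write the dump to."""
    args = build_procdump_args(pid)
    subprocess.run(args, check=True)  # raises if ProcDump exits with an error
    return args[-1]


if __name__ == "__main__":
    # Only prints the command; call collect_dump(12628) to actually run it.
    print(" ".join(build_procdump_args(12628)))
```

Keeping the argument builder separate from the call that runs ProcDump makes it easy to check the command before anything is executed.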

The resulting file is close to 2 GB in size. Since 7-Zip is included in the WebApp out of the box, we can optionally archive the snapshot:

The compressed file is ~310 MB, which might still be too much for HTTPS.
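A rough back-of-the-envelope calculation shows why size matters here. The sketch below treats "close to 2 GB" as 2048 MB, and the link speeds are assumptions picked purely for illustration, not measurements.

```python
def transfer_seconds(size_mb, link_mbit_per_s):
    """Seconds needed to move size_mb megabytes over a link of the given speed."""
    return size_mb * 8 / link_mbit_per_s


# Sizes from this post; link speeds are illustrative assumptions.
for label, size_mb in [("raw dump", 2048), ("compressed", 310)]:
    for speed in (10, 100):
        minutes = transfer_seconds(size_mb, speed) / 60
        print(f"{label:>10} at {speed:>3} Mbit/s: {minutes:.1f} min")
```

Even the compressed file keeps a single request open for minutes on a slow link, so any interruption means starting over unless the transfer can be resumed.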

FTP to transfer large files

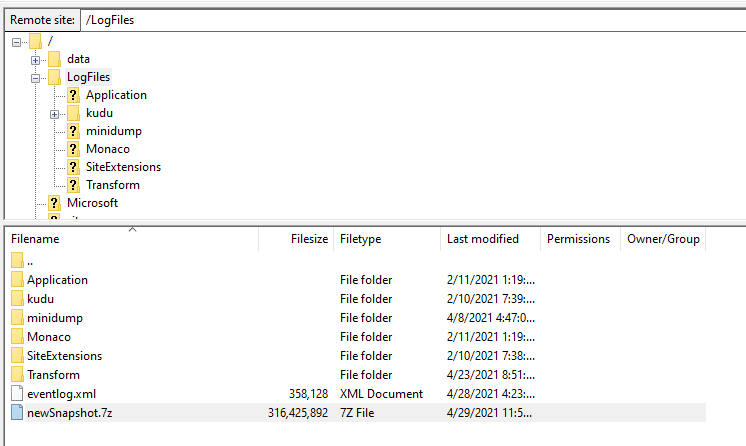

D:\home is already exposed via FTP for WebApp deployments, so copy the files there:

The publishing profile contains the FTP credentials needed to reach the file system:
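For those who prefer not to copy the values by hand, here is a minimal sketch of pulling the FTP settings out of a downloaded publishing profile. It assumes the usual layout of a `publishProfile` element with `publishMethod`, `publishUrl`, `userName` and `userPWD` attributes; the sample document and every value in it are made up.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse


def ftp_settings(publish_settings_xml):
    """Extract FTP host, remote path, user and password from a publish profile."""
    root = ET.fromstring(publish_settings_xml)
    for profile in root.iter("publishProfile"):
        # The file usually holds several profiles; only the FTP one is needed.
        if profile.get("publishMethod") == "FTP":
            url = urlparse(profile.get("publishUrl"))
            return {
                "host": url.hostname,
                "path": url.path,
                "user": profile.get("userName"),
                "password": profile.get("userPWD"),
            }
    raise LookupError("no FTP profile found")


# Made-up sample that mimics the assumed layout; not real credentials.
SAMPLE = """<publishData>
  <publishProfile profileName="example - Web Deploy" publishMethod="MSDeploy"
      publishUrl="example.scm.azurewebsites.net:443"
      userName="$example" userPWD="secret" />
  <publishProfile profileName="example - FTP" publishMethod="FTP"
      publishUrl="ftp://waws-prod-example.ftp.azurewebsites.windows.net/site/wwwroot"
      userName="example\\$example" userPWD="secret" />
</publishData>"""

if __name__ == "__main__":
    settings = ftp_settings(SAMPLE)
    print(settings["host"], settings["user"])
```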

Upon connecting, we see the snapshot waiting to be downloaded at high speed:
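Any FTP client will do. For a scripted download, the sketch below uses Python's standard `ftplib` and resumes from a partial local file, which addresses the dropped-connection problem described earlier. It assumes the server accepts explicit FTPS and honours the `REST` command; host, credentials and paths are placeholders supplied by the caller.

```python
import os
from ftplib import FTP_TLS


def download_with_resume(ftp, remote_path, local_path, blocksize=1024 * 1024):
    """Fetch remote_path, continuing from a partial local file if one exists."""
    offset = os.path.getsize(local_path) if os.path.exists(local_path) else 0
    ftp.voidcmd("TYPE I")  # switch to binary mode before asking for the size
    total = ftp.size(remote_path)
    if total is not None and offset >= total:
        return offset  # nothing left to fetch
    with open(local_path, "ab") as out:
        # rest=offset makes ftplib send REST, so the server skips the bytes
        # that are already on disk.
        ftp.retrbinary(f"RETR {remote_path}", out.write,
                       blocksize=blocksize, rest=offset or None)
    return os.path.getsize(local_path)


def fetch_snapshot(host, user, password, remote_path, local_path):
    """Connect over explicit FTPS (an assumption) and download one file."""
    with FTP_TLS(host) as ftp:
        ftp.login(user, password)
        ftp.prot_p()  # encrypt the data channel as well as the control channel
        return download_with_resume(ftp, remote_path, local_path)
```

If the connection drops, calling `fetch_snapshot` again with the same arguments picks up where the previous attempt stopped instead of starting from zero.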

Summary

We’ve triggered memory dump collection on the WebApp side using software already installed in the WebApp. The resulting file can optionally be archived. The fastest way to download the snapshot is FTP.