The Performance Engineering of Software Systems course starts with a simple math task to solve.

The optimizations made during the lecture make the code 53,292 times faster.

How much faster can real-life software be without changing the technology?

Looking at the top methods in a CPU report: what to optimize

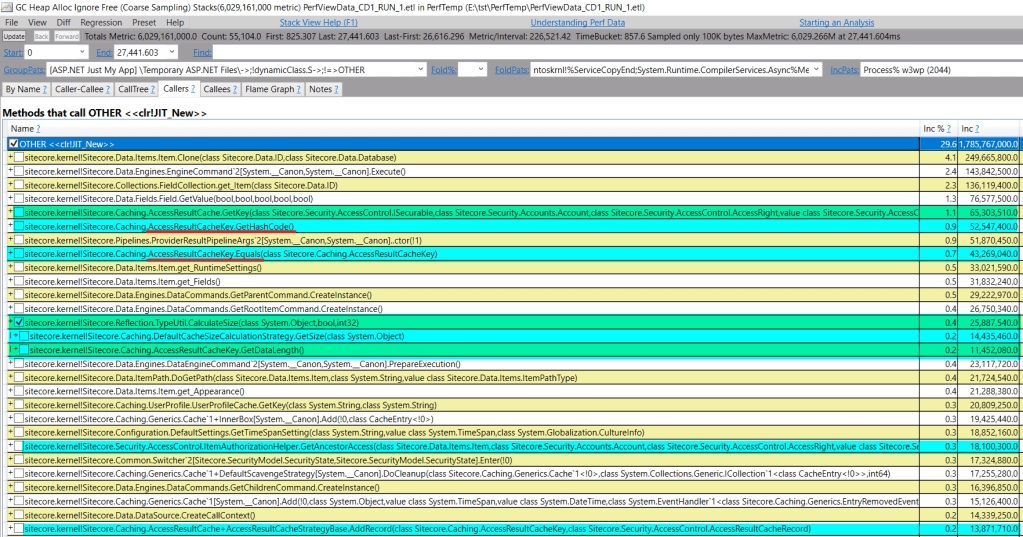

PerfView profiles from a real-life production system show which operations are CPU-intensive.

It turns out that Hashtable lookup is the most frequent operation, accounting for 8% of the recorded CPU.

No wonder, as the whole of ASP.NET is built around it, mostly through HttpContext.Items. Sitecore takes it to the next level, as all Sitecore.Context-specific code is bound to that collection (context site, user, database…).

Second place goes to memory allocations, that is, the new operator. It is the result of a developer myth that memory allocations are cheap, which encourages overusing them here and there. The sum of many small numbers turns into a big number that earns the second CPU consumer badge.

Concurrent dictionary lookups come third, at half the cost of allocations. Think about it for a moment: all the cache lookups together consume half as much CPU as the allocations performed.

What objects are allocated often?

AccessResultCache stands out in the view. It is responsible for quickly answering the question of whether a user can read/write/… an item.

AccessResultCache is responsible for at least 30% of lookups

Among the 150+ different caches in Sitecore, a single one is responsible for 30% of lookups. That could be due either to very frequent access or to heavy logic in GetHashCode and Equals when locating the value. [Spoiler: in our case, both]

AccessResultCache causes many allocations

Why does the Equals method allocate memory?

It is quite odd to see memory allocations in the Equals method of a cache key, which should be designed for a fast equality check. They are the result of a design oversight that causes boxing.

Since AccountType and PropagationType are enums, they are value types. The code, however, compares them via the object.Equals(object, object) overload, which boxes each argument and leads to unnecessary memory allocations. Comparing with a == b would have avoided this.
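To make the difference concrete, here is a minimal sketch of both comparison styles. The enum definitions and the KeySketch class are hypothetical stand-ins written for illustration; they are not the Sitecore source.

```csharp
using System;

// Hypothetical stand-ins for the Sitecore enums; names and members are assumptions.
public enum AccountType { Unknown, Role, User }
public enum PropagationType { Unknown, Descendants, Entity, Any }

public sealed class KeySketch
{
    public AccountType AccountType { get; }
    public PropagationType PropagationType { get; }

    public KeySketch(AccountType accountType, PropagationType propagationType)
    {
        AccountType = accountType;
        PropagationType = propagationType;
    }

    // object.Equals(object, object) takes object parameters, so each enum
    // argument is boxed: up to four heap allocations per call.
    public bool EqualsWithBoxing(KeySketch other) =>
        other != null
        && object.Equals(AccountType, other.AccountType)
        && object.Equals(PropagationType, other.PropagationType);

    // == on enums compares the underlying integers; no boxing is involved.
    public bool EqualsWithoutBoxing(KeySketch other) =>
        other != null
        && AccountType == other.AccountType
        && PropagationType == other.PropagationType;
}
```

Both methods return the same answer; only the second one does so without touching the heap, which matters when the comparison runs on every cache lookup.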

Why is GetHashCode CPU-heavy?

It is computed on each call without persisting the result.

What could be improved?

- Avoid boxing in AccessResultCacheKey.Equals

- Cache AccessResultCacheKey.GetHashCode

- Cache AccessRight.GetHashCode

The main question: how do we measure the performance win from the improvements?

- How to test on close-to-live data (not dummy data)?

- How to measure CPU and memory usage accurately?

Getting the live data from memory snapshot

Since a memory snapshot contains all the data the application operates on, the cache keys can be extracted into a file with ClrMD and then used for the benchmark:

    private const string snapshot = @"E:\PerfTemp\w3wp_CD_DUMP_2.DMP";
    private const string cacheKeyType = @"Sitecore.Caching.AccessResultCacheKey";
    public const string storeTo = @"E:\PerfTemp\accessResult";

    private static void SaveAccessResltToCache()
    {
        using (DataTarget dataTarget = DataTarget.LoadCrashDump(snapshot))
        {
            ClrInfo runtimeInfo = dataTarget.ClrVersions[0];
            ClrRuntime runtime = runtimeInfo.CreateRuntime();
            var cacheType = runtime.Heap.GetTypeByName(cacheKeyType);

            var caches = from o in runtime.Heap.EnumerateObjects()
                         where o.Type?.MetadataToken == cacheType.MetadataToken
                         let accessRight = GetAccessRight(o)
                         where accessRight != null
                         let accountName = o.GetStringField("accountName")
                         let cacheable = o.GetField<bool>("cacheable")
                         let databaseName = o.GetStringField("databaseName")
                         let entityId = o.GetStringField("entityId")
                         let longId = o.GetStringField("longId")
                         let accountType = o.GetField<int>("<AccountType>k__BackingField")
                         let propagationType = o.GetField<int>("<PropagationType>k__BackingField")
                         select new Tuple<AccessResultCacheKey, string>(
                             new AccessResultCacheKey(accessRight, accountName, databaseName, entityId, longId)
                             {
                                 Cacheable = cacheable,
                                 AccountType = (AccountType)accountType,
                                 PropagationType = (PropagationType)propagationType
                             },
                             longId);

            var allKeys = caches.ToArray();
            var content = JsonConvert.SerializeObject(allKeys);
            File.WriteAllText(storeTo, content);
        }
    }

    private static AccessRight GetAccessRight(ClrObject source)
    {
        var o = source.GetObjectField("accessRight");
        if (o.IsNull)
        {
            return null;
        }

        var name = o.GetStringField("_name");
        return new AccessRight(name);
    }

The next step is to bake a FasterAccessResultCacheKey that caches hashCode and avoids boxing:

    public bool Equals(FasterAccessResultCacheKey obj)
    {
        // Reference checks first: they are the cheapest way to decide.
        if (ReferenceEquals(obj, null)) return false;
        if (ReferenceEquals(this, obj)) return true;

        // Enums are compared with ==, so nothing is boxed.
        return string.Equals(obj.EntityId, EntityId)
            && string.Equals(obj.AccountName, AccountName)
            && obj.AccountType == AccountType
            && obj.AccessRight == AccessRight
            && obj.PropagationType == PropagationType;
    }

In addition, the AccessRight hash code is cached.
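The Equals method above is only half of a faster key; the other half is a hash code that is computed once. The following is a minimal sketch under stated assumptions, not the actual FasterAccessResultCacheKey: the class name CachedHashKeySketch, the reduced set of fields, and the hash-combining formula are all hypothetical.

```csharp
using System;

// Hypothetical, simplified key: property names mirror the article, the rest is assumed.
public sealed class CachedHashKeySketch : IEquatable<CachedHashKeySketch>
{
    private readonly int _hashCode; // computed once in the constructor

    public string EntityId { get; }
    public string AccountName { get; }
    public string AccessRight { get; }

    public CachedHashKeySketch(string entityId, string accountName, string accessRight)
    {
        EntityId = entityId;
        AccountName = accountName;
        AccessRight = accessRight;

        // All inputs are read-only, so the stored hash can never go stale.
        unchecked
        {
            int hash = 17;
            hash = hash * 31 + (entityId?.GetHashCode() ?? 0);
            hash = hash * 31 + (accountName?.GetHashCode() ?? 0);
            hash = hash * 31 + (accessRight?.GetHashCode() ?? 0);
            _hashCode = hash;
        }
    }

    // Every dictionary lookup calls this; it is now a single field read.
    public override int GetHashCode() => _hashCode;

    public bool Equals(CachedHashKeySketch other)
    {
        if (other is null) return false;
        if (ReferenceEquals(this, other)) return true;

        // Different hashes mean different keys, so strings are compared only when needed.
        return _hashCode == other._hashCode
            && string.Equals(EntityId, other.EntityId)
            && string.Equals(AccountName, other.AccountName)
            && string.Equals(AccessRight, other.AccessRight);
    }

    public override bool Equals(object obj) => Equals(obj as CachedHashKeySketch);
}
```

Caching is only safe because the key is immutable; if any field could change after construction, the stored hash would no longer match and dictionary lookups would silently miss.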

BenchmarkDotNet time

    [MemoryDiagnoser]
    public class AccessResultCacheKeyTests
    {
        private const int N = 100 * 1000;

        private readonly FasterAccessResultCacheKey[] OptimizedArray;
        private readonly ConcurrentDictionary<FasterAccessResultCacheKey, int> OptimizedDictionary = new ConcurrentDictionary<FasterAccessResultCacheKey, int>();

        private readonly Sitecore.Caching.AccessResultCacheKey[] StockArray;
        private readonly ConcurrentDictionary<Sitecore.Caching.AccessResultCacheKey, int> StockDictionary = new ConcurrentDictionary<Sitecore.Caching.AccessResultCacheKey, int>();

        public AccessResultCacheKeyTests()
        {
            var fileData = Program.ReadKeys();

            StockArray = fileData.Select(e => e.Item1).ToArray();

            OptimizedArray = (from pair in fileData
                              let a = pair.Item1
                              let longId = pair.Item2
                              select new FasterAccessResultCacheKey(a.AccessRight.Name, a.AccountName, a.DatabaseName, a.EntityId, longId, a.Cacheable, a.AccountType, a.PropagationType))
                             .ToArray();

            for (int i = 0; i < StockArray.Length; i++)
            {
                var elem1 = StockArray[i];
                StockDictionary[elem1] = i;

                var elem2 = OptimizedArray[i];
                OptimizedDictionary[elem2] = i;
            }
        }

        [Benchmark]
        public void StockAccessResultCacheKey()
        {
            for (int i = 0; i < N; i++)
            {
                var safe = i % StockArray.Length;
                var key = StockArray[safe];
                var restored = StockDictionary[key];
                MainUtil.Nop(restored);
            }
        }

        [Benchmark]
        public void OptimizedCacheKey()
        {
            for (int i = 0; i < N; i++)
            {
                var safe = i % OptimizedArray.Length;
                var key = OptimizedArray[safe];
                var restored = OptimizedDictionary[key];
                MainUtil.Nop(restored);
            }
        }
    }

Twice as fast, three times less memory

Summary

It took a few hours to double the AccessResultCache performance.

Where there is a will, there is a way.
